Pixels

Today, most computers use color displays that produce images using a grid of pixels. Often each color pixel is made up of three sub-pixels—red, green, and blue—though the actual hardware may use a different pattern. Because these pixels are small and close together, the image appears continuous. Because our eyes can only directly distinguish red, green, and blue light, the screen can reproduce most of the colors we are capable of seeing.

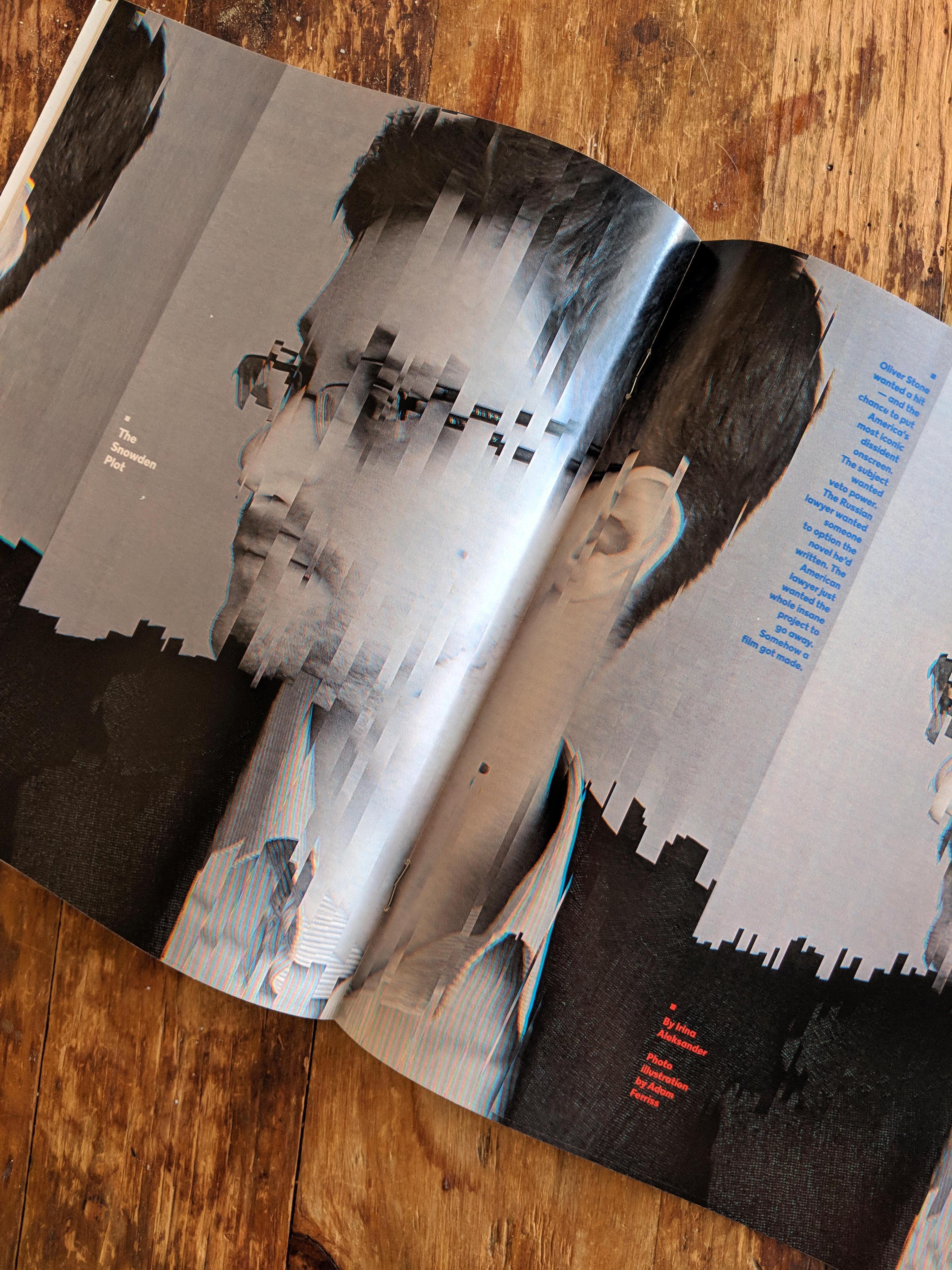

Photo by Peter Halasz

When you work with a graphics library like p5.js or the JavaScript Canvas API, you don’t have to think about individual pixels. You can use high-level methods like ellipse() and image(), and the library will do the low-level work of setting individual pixel values for you. This process of drawing shapes with pixels is called rasterization. Because the p5.js drawing calls handle the details of rasterization for you, you give up some control. When you draw a circle with ellipse(), every pixel in the circle will be changed to the current fill() color. The p5.js library provides no way to fill the ellipse with random colors, or a gradient, or colors generated by a function. To get this level of control, you need to take control of the rasterization yourself. This is the classic high-level/low-level trade-off: coding at a low level takes more work but offers more control.
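As a rough illustration of what taking over rasterization can look like, the sketch below fills a circle one pixel at a time and asks a function for each pixel's color. It works on a plain object with width, height, and a flat RGBA pixels array rather than on p5.js itself. The names fillCircle, colorAt, and circleImg are made up for this example.

```javascript
// Hypothetical helper: rasterize a filled circle into an RGBA pixel array.
// `img` is any object with `width`, `height`, and a flat `pixels` array
// holding four values per pixel (R, G, B, A).
function fillCircle(img, cx, cy, radius, colorAt) {
  for (let y = 0; y < img.height; y++) {
    for (let x = 0; x < img.width; x++) {
      const dx = x - cx;
      const dy = y - cy;
      // Skip pixels whose centers fall outside the circle.
      if (dx * dx + dy * dy > radius * radius) continue;

      // Ask the caller what color this particular pixel should be.
      const c = colorAt(x, y);
      const i = (y * img.width + x) * 4;
      img.pixels[i] = c[0];
      img.pixels[i + 1] = c[1];
      img.pixels[i + 2] = c[2];
      img.pixels[i + 3] = c[3];
    }
  }
}

// A blank 9x9 image: every value starts at 0 (transparent black).
const circleImg = {
  width: 9,
  height: 9,
  pixels: new Uint8ClampedArray(9 * 9 * 4),
};

// Fill a circle with a black-to-red gradient running left to right.
fillCircle(circleImg, 4, 4, 3, (x, y) => [Math.round((x / 8) * 255), 0, 0, 255]);
```

Because the color comes from a function of x and y, the same loop could just as easily produce random colors or any other per-pixel pattern.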

Vector Monitors

Computer displays don’t have to use pixels at all. One of the earliest video games, Tennis for Two, used a cathode ray oscilloscope as its display. In a cathode ray oscilloscope, signals are drawn by magnetically deflecting a beam of electrons as they race from an emitter—the cathode—towards a phosphor-coated glass screen. The phosphor glows where the electrons strike it, creating an image. This type of display can show smooth lines without any of the aliasing artifacts of a pixel display.

Vector monitors were used in later video games as well, including the Tempest arcade game and several games for the Vectrex home console.

This high-level/low-level trade-off has some important implications for the creative coder. When you work at a high level, you are not responsible for the details, so you tend to think about them less. Working directly with the pixels leads you to think differently about drawing with code.

Video Memory

Modern video pipelines are complicated, but at a basic level they work something like this: The red, green, and blue brightness values of every pixel on a display are stored in the computer’s RAM. In computers with a dedicated video card, this data is usually stored in the video card’s VRAM. Once per display refresh, the video hardware reads this data from memory, pixel by pixel, and sends it to the display over a display interface like DVI or HDMI. Hardware in the display receives this data and updates the brightness of each pixel as needed. If you change the values in the RAM, you will see the changes reflected on the screen.

The memory used to store the screen’s image is called the video buffer or framebuffer. Direct access to the screen’s framebuffer is pretty unusual on modern computers, and high-level libraries like p5.js don’t (and can’t) provide it. But p5.js does give you access to a pixel buffer storing the image shown on your sketch’s canvas. When you call drawing functions like rect() and ellipse(), p5.js updates the appropriate values in this buffer. The buffer is then composited into the rendered webpage by the browser. The browser window is composited onto the display’s framebuffer by the operating system and video hardware. The p5.js library provides two ways to directly read and set the color of a single pixel: get()/set() and the pixels[] array. Using get() and set() is easier, but using the pixels[] array is faster. This chapter has examples for using both.

A high-definition display is 1920 pixels wide and 1080 tall: 2,073,600 total pixels. Each pixel needs three bytes to represent its color value: one byte each for the red, green, and blue channels. In total that is 6,220,800 bytes (about 6 megabytes) of memory to keep track of the full HD image.
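The arithmetic can be checked with a few lines of code. This is only a sketch: frameBytes is a made-up helper name, and the four-byte case is included because arrays that also store an alpha value use four values per pixel.

```javascript
// Hypothetical helper: bytes needed to store one uncompressed frame.
function frameBytes(width, height, bytesPerPixel) {
  return width * height * bytesPerPixel;
}

const hdPixels = 1920 * 1080;               // 2,073,600 pixels
const hdBytesRGB = frameBytes(1920, 1080, 3);  // 6,220,800 bytes (R, G, B)
const hdBytesRGBA = frameBytes(1920, 1080, 4); // 8,294,400 bytes (R, G, B, A)

console.log(hdPixels, hdBytesRGB, hdBytesRGBA);
```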

Today, 6 megabytes isn’t much, but many older computers didn’t have enough RAM to keep an entire full-color image of the screen in memory. These computers used lower resolutions, limited palettes, and a variety of tricks to produce their screen output.

Writing Pixel Data

Random Pixels Example

This example uses set() to set each pixel in a 10x10 image to a random color. This example doesn’t write to the canvas pixels directly. Instead, it creates an empty image, writes to its pixels, and then draws the image to the canvas. This approach is more flexible and avoids the complexities of pixelDensity. This also lets us draw the image scaled up so you can see the pixels more easily.

Let’s look at the code in depth.

Line 10: Use createImage() to create a new, empty 10x10 image in memory. We can draw this image just like an image loaded from a .jpg or .gif.

Line 11: Use loadPixels() to tell p5 that we want to access the pixels of the image for reading or writing. You must call loadPixels() before using set(), get(), or the pixels[] array.

Line 13: Set up a nested loop. The inner content of the loop will be run once for every pixel.

Line 15: Use the color() function to create a color value, which is assigned to c. Color values hold the R, G, B, and A values of a color. The color function takes into account the current colorMode().

Line 16: Use set() to set the color of the pixel at x, y.

Line 20: Use updatePixels() to tell p5 we are done accessing the pixels of the image.

Line 22: Use noSmooth() to tell p5 not to smooth the image when we scale it: we want it pixelated. This resembles Photoshop’s ‘nearest neighbor’ scaling method.

Line 23: Draw the image, scaling up so we can clearly see each pixel.
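The steps above might be put together roughly as follows. This is a reconstruction for illustration, not the original example, so the line numbers in the walkthrough will not match it. It assumes p5.js global mode, and randomImg is a made-up variable name.

```javascript
// A sketch of the steps described above (p5.js global mode).
let randomImg;

function setup() {
  createCanvas(500, 500);

  // Create an empty 10x10 image and ask for access to its pixels.
  randomImg = createImage(10, 10);
  randomImg.loadPixels();

  // Visit every pixel and give it a random color.
  for (let y = 0; y < randomImg.height; y++) {
    for (let x = 0; x < randomImg.width; x++) {
      const c = color(random(255), random(255), random(255));
      randomImg.set(x, y, c);
    }
  }

  // Tell p5 we are done changing the pixels.
  randomImg.updatePixels();

  // Draw the image scaled up to fill the canvas, without smoothing,
  // so each image pixel shows up as a crisp square.
  noSmooth();
  image(randomImg, 0, 0, width, height);
}
```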

Gradient Example

This example has the same structure as the first one, but draws a gradient pixel-by-pixel.

Line 15: Instead of choosing a color at random, this example calculates a color based on the current x and y position of the pixel being set.
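One way to compute a color from a pixel's position is sketched below. The particular gradient (red increasing to the right, green increasing downward) and the names gradientColor and fillGradient are assumptions for illustration; the original example may use a different formula. The code relies on set() accepting an [R, G, B, A] array.

```javascript
// Hypothetical helper: compute a color from a pixel's position.
// Red grows from left to right, green grows from top to bottom.
function gradientColor(x, y, w, h) {
  // Math.max avoids dividing by zero for 1-pixel-wide or 1-pixel-tall images.
  const r = Math.round((x / Math.max(w - 1, 1)) * 255);
  const g = Math.round((y / Math.max(h - 1, 1)) * 255);
  return [r, g, 0, 255]; // [R, G, B, A]
}

// Fill every pixel of a p5.Image-like object using set().
// Call img.loadPixels() before and img.updatePixels() after.
function fillGradient(img) {
  for (let y = 0; y < img.height; y++) {
    for (let x = 0; x < img.width; x++) {
      img.set(x, y, gradientColor(x, y, img.width, img.height));
    }
  }
}
```

In a sketch, this could be used as: create the image with createImage(10, 10), call loadPixels(), call fillGradient(img), then call updatePixels() and draw the image.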

Random Access Example

The first two examples use a nested loop to set a value for every pixel in the image. The loop visits every pixel in a sequential order. That tactic is commonly used in pixel generating and processing scripts, but not always. This example places red pixels at random places on the image.
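Random access could look something like the following sketch. The name scatterRed and the rand parameter are made up for this example. rand is any function returning a number from 0 up to (but not including) 1, so Math.random works, and in a p5.js sketch you could pass () => random() instead.

```javascript
// Hypothetical helper: set `count` randomly chosen pixels to red.
// Call img.loadPixels() before and img.updatePixels() after.
function scatterRed(img, count, rand = Math.random) {
  for (let n = 0; n < count; n++) {
    // Pick a random whole-number pixel coordinate inside the image.
    const x = Math.floor(rand() * img.width);
    const y = Math.floor(rand() * img.height);
    img.set(x, y, [255, 0, 0, 255]);
  }
}
```

Since positions are chosen independently, the same pixel can be picked more than once, so the image may end up with fewer than count red pixels.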

Coding Challenges One

Explore this chapter’s example code by completing the following challenges.

Modify the Random Pixels Example

- Change the image resolution to 20x20. •

- Change the image resolution to 500x500. •

- Change the image resolution back to 10x10. •

- Make each pixel a random shade of blue. ••

- Make each pixel a random shade of gray. ••

- Color each pixel with noise() to visualize its values. •••

Modify the Gradient Example

- Make a horizontal black-to-blue gradient. •

- Make a vertical green-to-black gradient. •

- Make a horizontal white-to-blue gradient. •

- Make a vertical rainbow gradient. Tip: colorMode() ••

- Create an inset square with a gradient, surrounded by randomly-colored pixels. •••

- Make a radial gradient from black to red. Tip: dist() •••

- Create a diagonal gradient. •••

Modify the Random Access Example

- Change the image resolution to 50x50, adjust the code to fill the image. •

- Instead of drawing single pixels, draw little plus marks (+) at random locations. ••

- Make each + a random color. ••

Start from Scratch

- Use sin() to create a repeating black-to-red-to-black color wave. •••

- Create a 512x512 image and set the blue value of each pixel to (y&x) * 16. •••

Reading + Processing Pixel Data

The p5.js library also allows you to read pixel data, so you can process images or use images as inputs. These examples use this low-res black-and-white image of Earth.

Read Pixels Example 1

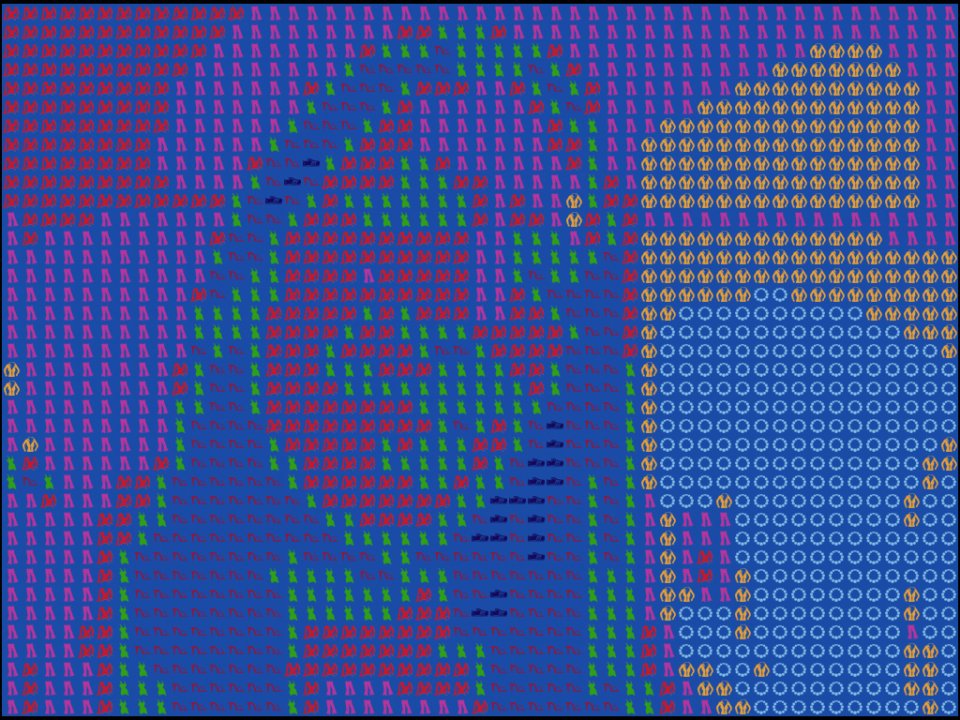

This example loads the image of Earth, loops over its pixels, and changes white pixels to red and black pixels to blue.

First we need to load an image to read pixel data from.

Line 3: Declare a variable to hold our image.

Line 5: The preload() function. Use this function to load assets. p5.js will wait until all assets are loaded before calling setup() and draw().

Line 6: Load the image.

With our image loaded we can process the pixels.

Line 16: Set up a nested loop to cover every pixel.

Line 18: Use get() to load the color data of the current pixel. get() returns an array like [255, 0, 0, 255] with components for red, green, blue, and alpha.

Line 22: Read the red component of the color. We could also access it directly like this: in_color[0]

Line 25: Check whether the red value is 255 to decide if the pixel is white or black. Since we know the image is only black and white, checking one channel is enough.

Line 26 and Line 28: Set out_color to red or blue.

Line 31: Set the pixel’s color to out_color.

Line 35: Use updatePixels() to tell the image that we have updated the pixel array.
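The pixel-processing part of this walkthrough might be sketched as the helper below. It is an illustration rather than the original code: recolor, inColor, and outColor are names chosen here, and the image is any object that provides width, height, get(), and set() the way a p5.Image does.

```javascript
// Hypothetical helper: recolor a black-and-white image with get() and set().
// White pixels become red; everything else becomes blue.
// Call img.loadPixels() before and img.updatePixels() after.
function recolor(img) {
  for (let y = 0; y < img.height; y++) {
    for (let x = 0; x < img.width; x++) {
      // get() returns an array like [R, G, B, A].
      const inColor = img.get(x, y);
      const redValue = inColor[0];

      // In a pure black-and-white image, red is 255 only for white pixels.
      const outColor = redValue === 255 ? [255, 0, 0, 255] : [0, 0, 255, 255];
      img.set(x, y, outColor);
    }
  }
}
```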

Read Pixels Example 2

This example compares each pixel to the one below it. If the upper pixel is darker, it is changed to magenta.
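A comparison like this could be sketched as follows. The helper names are invented for this example, and simpleBrightness (an average of the red, green, and blue values) is a stand-in used so the code runs outside p5.js; in a sketch you would more likely compare with p5's lightness().

```javascript
// Stand-in brightness measure: the average of R, G, and B (0 to 255).
// This is an assumption for illustration, not p5's lightness().
function simpleBrightness(c) {
  return (c[0] + c[1] + c[2]) / 3;
}

// Hypothetical helper: turn a pixel magenta if it is darker than the
// pixel directly below it.
// Call img.loadPixels() before and img.updatePixels() after.
function markDarkerThanBelow(img) {
  // Stop one row early: the bottom row has no pixel below it.
  for (let y = 0; y < img.height - 1; y++) {
    for (let x = 0; x < img.width; x++) {
      const thisColor = img.get(x, y);
      const belowColor = img.get(x, y + 1);
      if (simpleBrightness(thisColor) < simpleBrightness(belowColor)) {
        img.set(x, y, [255, 0, 255, 255]);
      }
    }
  }
}
```

Each pixel is read before it is changed and is never read again afterward, so earlier writes do not affect later comparisons.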

Image as Input Example

This example doesn’t draw the image at all. Instead, the image is used as an input that controls where the red ellipses are drawn. Using images as inputs is a powerful technique that allows you to mix manual art and procedurally-generated content.
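One possible way to use an image as an input is sketched below: try random positions and keep only those where the image is bright. This is an assumed approach for illustration and not necessarily how the example works. pickPoints, attempts, and rand are made-up names, and the brightness threshold of 127 is arbitrary.

```javascript
// Hypothetical helper: choose random positions and keep the ones where
// the input image is bright. Points are returned as fractions (0 to 1)
// of the image size so they can be scaled to any canvas.
// Call img.loadPixels() first.
function pickPoints(img, attempts, rand = Math.random) {
  const points = [];
  for (let n = 0; n < attempts; n++) {
    const x = Math.floor(rand() * img.width);
    const y = Math.floor(rand() * img.height);
    const c = img.get(x, y); // [R, G, B, A]
    // Use the red channel as a brightness test for a black-and-white image.
    if (c[0] > 127) {
      points.push({ x: x / img.width, y: y / img.height });
    }
  }
  return points;
}
```

In a p5.js sketch, each returned point p could then be drawn with something like ellipse(p.x * width, p.y * height, 5, 5), without ever drawing the input image itself.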

Coding Challenges Two

Explore this chapter’s example code by completing the following challenges.

Modify Read Pixels Example 1

- Make the program turn white pixels green. •

- Turn the black pixels to a random shade of red. •

- Turn the black pixels into a vertical, black-to-red gradient. ••

- Comment out line 34 which calls updatePixels(). What happens? ••

Modify Read Pixels Example 2

- Change the lightness() comparison to >. •

- Change the lightness() comparison to !=. •

- Add an else block that changes the pixels to black. ••

- Starting with the original code without any changes, set the out_color to the average of this_color and below_color. Here is an example you could follow: •••

    var color_a = color(worldImage.get(0, 1));
    var color_b = color(worldImage.get(0, 2));
    var blended_color = lerpColor(color_a, color_b, 0.5);

- Change worldImage.set(x, y, out_color); to worldImage.set(x, y+1, out_color);. •••

- Remove the if statement (but not its contents) so that its content always runs. •••

Modify the Image as Input Example

- Tell the program to use the image below by switching which loadImage() call is commented out in preload(). •

- Adjust the expression that determines dot_size to make the result prettier. ••

Working Directly with the pixels[] Array

You can read and write individual pixel values with the get() and set() methods. These methods are easy to use, but they are really slow. A faster approach is to use loadPixels() and updatePixels() to copy the canvas or image data to and from the pixels[] array. Then, with a little bit of math, you can work directly with the pixels[] array data. This is a little more work but can run hundreds of times faster.

Reading and writing the pixels[] array directly is faster because it bypasses the safety measures that slow down get() and set(), such as bounds checking. We have to be a little more careful about what we are doing, though, or we might create bugs.

The getQuick() and setQuick() functions below read and write a pixel’s color value from an image’s pixels[] array. You must call loadPixels() before calling these functions. When you are done working with the pixels[] array, you should call updatePixels() to update the image with your changes.

    // getQuick()
    // find the RGBA values of the pixel at `x`, `y` in the pixel array of `img`
    // unlike get(), this function only supports getting a single pixel
    // it also doesn't do any bounds checking or other checks
    //
    // we don't need to worry about screen pixel density here, because we are
    // not reading from the screen, just an image
    function getQuick(img, x, y) {
      const i = (y * img.width + x) * 4;
      return [
        img.pixels[i],
        img.pixels[i + 1],
        img.pixels[i + 2],
        img.pixels[i + 3],
      ];
    }

    // setQuick()
    // set the RGBA values of the pixel at `x`, `y` in the pixel array of `img`
    // unlike set(), this function only supports setting a single pixel
    // it doesn't work with a p5 color object
    // it also doesn't do any bounds checking or other checks
    function setQuick(img, x, y, c) {
      const i = (y * img.width + x) * 4;
      img.pixels[i + 0] = c[0];
      img.pixels[i + 1] = c[1];
      img.pixels[i + 2] = c[2];
      img.pixels[i + 3] = c[3];
    }

Copy the getQuick() function above into your sketch. You can then replace a built-in p5 get call with a call to getQuick:

Using get()

    // in loop
    c = img.get(x, y);

Using getQuick()

    // before loop
    img.loadPixels();

    // in loop
    c = getQuick(img, x, y);

    // after loop
    img.updatePixels();

get() vs getQuick()

The following example compares the performance of using get() and set() with getQuick() and setQuick() to read and invert the color values in a small image. On my machine, using get() takes about 30 times longer than getQuick() for small images and about 250 times longer for large images.
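As a sketch of the kind of work being timed, inverting an image through the pixels[] array might look like the code below. invertPixels is a made-up name, and the function assumes an image-like object whose pixels array holds four values per pixel. Because every pixel gets the same treatment, the loop can walk the array directly without computing an index from x and y.

```javascript
// Hypothetical helper: invert the colors of an image in place by
// working directly on its pixels array.
// Call img.loadPixels() before and img.updatePixels() after.
function invertPixels(img) {
  // Step through the array four values (one pixel) at a time.
  for (let i = 0; i < img.pixels.length; i += 4) {
    img.pixels[i] = 255 - img.pixels[i];         // red
    img.pixels[i + 1] = 255 - img.pixels[i + 1]; // green
    img.pixels[i + 2] = 255 - img.pixels[i + 2]; // blue
    // img.pixels[i + 3] is alpha; leave it unchanged.
  }
}
```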

The Canvas + Pixel Density

You can work with the pixels in an image using image.pixels[] or the pixels of the canvas with just pixels[].

When accessing the pixel data of the canvas itself, you need to consider the pixel density p5 is using. By default, p5 will create a high-dpi canvas when running on a high-dpi (retina) display. You can call pixelDensity(1) before creating your canvas to disable this feature. Otherwise, you’ll need to take into account the density when calculating a position in the pixels[] array.

The examples on this page work with the pixels of images instead of the canvas to avoid this issue altogether. If you need to work with the canvas, the pixels documentation has info on working with higher pixel densities.
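For reference, here is a rough sketch of the density-aware calculation. It assumes the density d is a whole number, so that each canvas pixel covers a d-by-d block of entries in pixels[] and each row of the array is canvasWidth * d pixels wide. setCanvasPixel is a made-up helper name.

```javascript
// Hypothetical helper: set the canvas pixel at (x, y) in a pixels array
// created with pixel density `d` (assumed to be a whole number).
function setCanvasPixel(pixels, canvasWidth, d, x, y, c) {
  // One canvas pixel is stored as a d-by-d block of physical pixels.
  for (let j = 0; j < d; j++) {
    for (let i = 0; i < d; i++) {
      const index = 4 * ((y * d + j) * canvasWidth * d + (x * d + i));
      pixels[index] = c[0];
      pixels[index + 1] = c[1];
      pixels[index + 2] = c[2];
      pixels[index + 3] = c[3];
    }
  }
}
```

In a sketch this could be called between loadPixels() and updatePixels(), for example as setCanvasPixel(pixels, width, pixelDensity(), 10, 10, [255, 0, 0, 255]).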

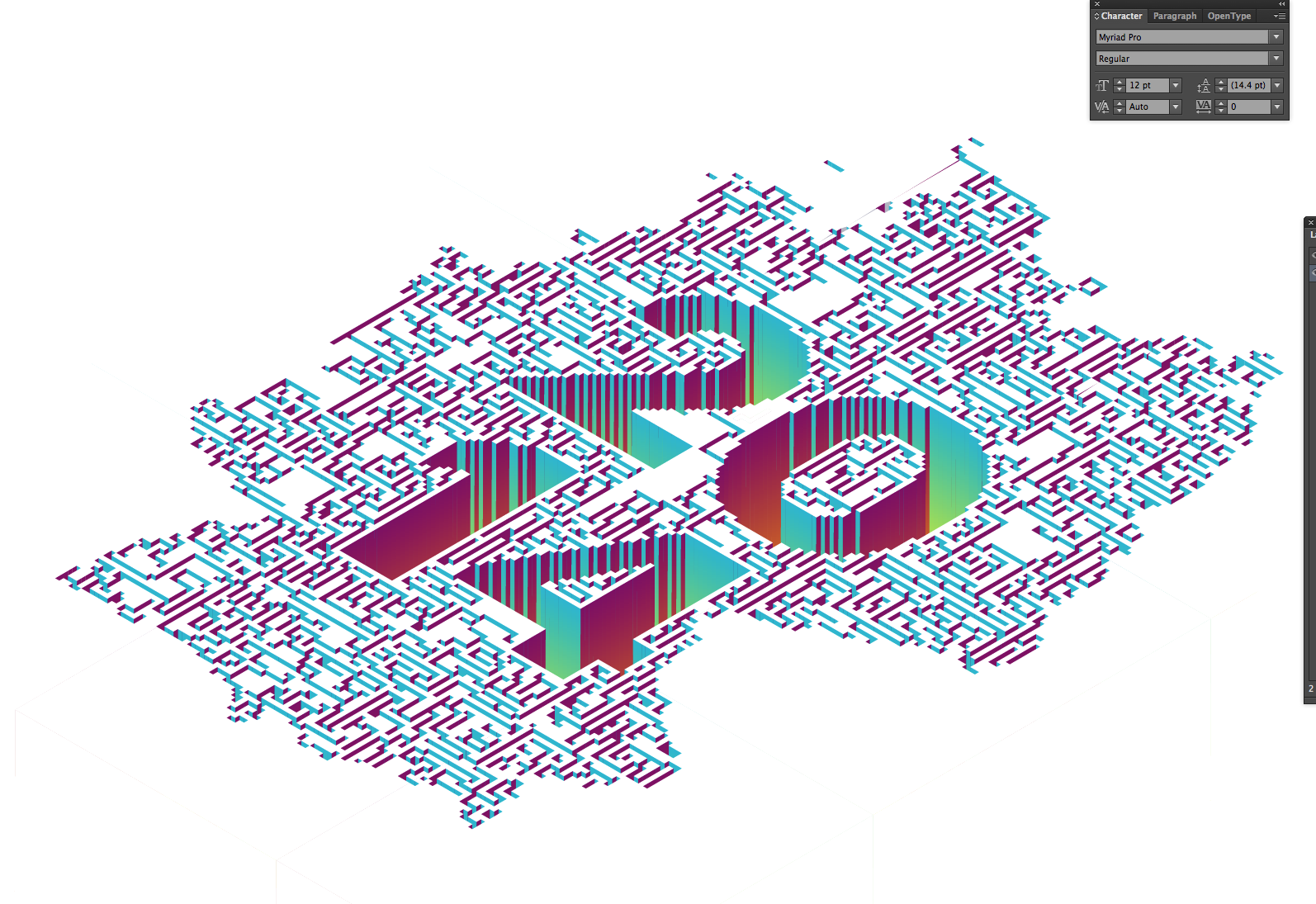

Growing Grass

This example uses an image as an input to control the density and placement of drawn grass.

Input Image

Keep Sketching!

Sketch

Explore working with image pixel data directly. Most of your sketches should be still images.

Create at least one sketch for each of the following:

- Generate an image from scratch: pixel by pixel. Don’t call any high-level drawing function like ellipse() or rect().

- Load an image and process its pixels.

- Use an image as an input source to control a drawing. Don’t show the original image, just the output.

Challenge: Pixel Ouroboros

Create code that processes an image. Feed the result back into your code and process it again. What happens after several generations?

Pair Challenge: Generate / Process

Work with a partner.

- Make a sketch that generates an image pixel by pixel.

- Give your image to your partner.

- Create a sketch that processes the pixels of that image.

Explore

Reaction Diffusion in Photoshop Tutorial Create a pattern in Photoshop by repeatedly applying filters.

Factorio Game Page A game in which players gather resources to create increasingly complex technology and factories—sometimes building these structures into pixel art.

Icon Machine Generator A pixel art web app that randomly generates potion bottle icons.

Memory-Mapped Video: The Scanning Game Technical Article An article on ASCII encoding and storage, part of a 1979 primer on computer graphics.